The macrostate with equal numbers of balls has the maximum amount of information this system can have. In other words, the information you need to describe this state is more than the information you need to describe any other state. Hence, Gibbs entropy is the physical analogue of Shannon entropy. However, the fact that you need more information doesn't imply anything about disorder, as you would need a definition of order to do that. On the contrary, one can find the number of balls being equal pretty plausible and ordered. Long story short, entropy is mostly (if not all) about redistributing the available energy until equilibria for all describable systems are reached.
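To make the link between "most microstates" and "most information" concrete, here is a minimal sketch. It assumes a toy model that is not spelled out in the text: two kinds of balls, $N_1$ of one and $N_2 = N - N_1$ of the other, placed one per box in $N$ boxes, with every arrangement equally likely. The function names and the value `N = 20` are hypothetical, chosen only for illustration.

```python
import math


def multiplicity(n_boxes: int, n1: int) -> int:
    """Number of distinct arrangements of n1 balls of one kind and
    n_boxes - n1 balls of the other kind, one ball per box (assumed model)."""
    return math.comb(n_boxes, n1)


def bits_to_specify(n_boxes: int, n1: int) -> float:
    """Shannon information, in bits, needed to single out one microstate
    when all microstates of the macrostate are equally likely."""
    return math.log2(multiplicity(n_boxes, n1))


N = 20  # hypothetical number of boxes

# Multiplicity of every macrostate, labelled by N1.
table = {n1: multiplicity(N, n1) for n1 in range(N + 1)}

# The macrostate with the highest degeneracy.
most_degenerate = max(table, key=table.get)
```

In this toy model the most degenerate macrostate is the equal split, and it is also the one whose microstate takes the most bits to pin down. Nothing in the calculation refers to "order" or "disorder".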

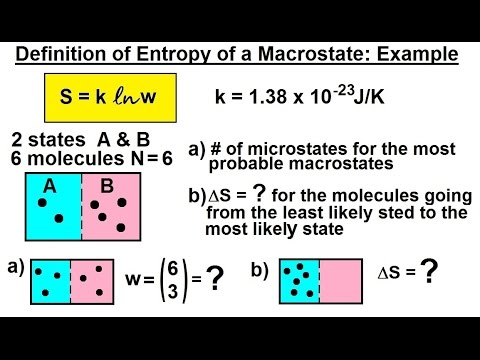

The macrostate with $N_1 = N_2$ is the most probable one and corresponds to maximum entropy. Another way to understand this without doing any calculation is to look for the macrostate that has the most microstates, that is, the highest degeneracy. If $N_1 = N_2$, then you can make the maximum number of combinations when distributing them to $N$ boxes. So, if this system is ergodic, it will spend most of its time visiting microstates of this macrostate.

Not sure if this is what you are looking for, but I'll give it a go. In a classical system, you are working in phase space. You have to subdivide this space into volume elements in order to do any type of counting. We assume that the energy of the system under consideration depends upon some $f$ generalized coordinates and momenta: $$E(q_1,\ldots,q_f,p_1,\ldots,p_f)$$ and thus phase space is divided into volume elements of $h_0^f$, where this 'size' remains undefined. The partition function is now calculated as: $$Z=\frac{1}{h_0^f}\int\cdots\int e^{-\beta E(q_1,\ldots,q_f,p_1,\ldots,p_f)}\,dq_1\cdots dq_f\,dp_1\cdots dp_f$$ where $\beta = 1/(k_B T)$.

What if phase space is not quantised? What if we want to statistically define entropy in a classical, non-quantum scenario? How do we define $\Gamma$ in the classical phase space of position and momentum? Can statistical entropy only be defined quantum mechanically?

Fragmentation between Classical Thermodynamics and Statistical Mechanics: How do we show that the first two definitions are compatible with the third? This seems challenging, especially because the third definition appears to be really vague.

This is sort of a bonus question, because I found a previous question tackling the same problem, but I think that the answer provided there is rather poorly made and excessively concise, and I also think it would be nice to complete this picture all in one question. But feel free to ignore this one if you think it would be a useless repetition of the answer I linked.
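As a rough illustration of what counting microstates by subdividing classical phase space into cells of size $h_0$ can look like, here is a sketch for a single one-dimensional harmonic oscillator ($f = 1$). The oscillator, the square cells, the parameter values and all function names are assumptions made for this example; none of them come from the discussion above. The count of cells with energy below $E$ is compared with the enclosed phase-space area $2\pi E/\omega$ divided by $h_0$.

```python
import math


def count_cells(energy, h0, m=1.0, omega=1.0):
    """Count phase-space cells of area h0 whose centre satisfies
    H(q, p) = p^2 / (2m) + m * omega^2 * q^2 / 2 <= energy."""
    dq = dp = math.sqrt(h0)  # square cells with dq * dp = h0 (an arbitrary choice)
    q_max = math.sqrt(2.0 * energy / (m * omega**2))
    p_max = math.sqrt(2.0 * m * energy)
    nq = int(q_max / dq) + 1
    n_p = int(p_max / dp) + 1
    count = 0
    for i in range(-nq, nq + 1):
        q = i * dq
        for j in range(-n_p, n_p + 1):
            p = j * dp
            if p * p / (2.0 * m) + 0.5 * m * omega**2 * q * q <= energy:
                count += 1
    return count


def analytic_cells(energy, h0, omega=1.0):
    """Area enclosed by the energy ellipse, 2 * pi * E / omega, in units of h0."""
    return 2.0 * math.pi * energy / (omega * h0)


def entropy_difference(e1, e2, h0):
    """S(e2) - S(e1) in units of k_B, using S = k_B * ln(cell count)."""
    return math.log(count_cells(e2, h0) / count_cells(e1, h0))
```

In this sketch, shrinking $h_0$ raises every count by roughly the same factor, so $\ln\Gamma$ only shifts by an additive constant, while the entropy difference between energies $E$ and $2E$ stays close to $\ln 2$ in units of $k_B$. That is one way to see why the undefined 'size' $h_0$ does not affect entropy differences in the classical treatment.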

We completely change the setup: consider a reversible cyclical thermodynamical process. Then we simply define entropy as a generic function of the state variables (so, as a state function) that has the following property: $$dS = \frac{dQ}{T}$$ where $T$ is the temperature of the system in which the thermodynamical process is occurring and $dQ$ is the infinitesimal amount of heat being poured into the system in a reversible way.

So you can see for yourself that, at least at first glance, the definition of entropy is quite fragmented and confusing. Fortunately, we can easily unify the first and second definitions by invoking the second fundamental postulate of Statistical Mechanics, the Principle of Indifference, which tells us that: $$p_i = \frac{1}{\Gamma}$$ And then with a little bit of work we can easily show that the two definitions are equivalent.

But, even with this improvement, the picture remains fragmented, mainly because of two distinct problems:

Fragmentation between Classical and Quantum Mechanics: How do we count the number of possible microstates $\Gamma$ from a non-quantum, classical perspective? What I mean is: in the first two definitions we sort of took for granted that the number of possible compatible microstates is finite, but of course this can only be true in a quantum scenario, by hoping that the quantum phase space of position and momentum is quantised.

In the context of Thermodynamics and Statistical Mechanics we encounter, basically, three different definitions of entropy:

Consider an isolated macroscopic system. It has a macrostate and a microstate: the macrostate is the collection of its macroscopic properties, and the microstate is the precise condition of the constituent parts of the system. Then the entropy $S$ is defined as the following quantity: $$S = k_B \ln \Gamma$$ where $k_B$ is just the Boltzmann constant and $\Gamma$ is the number of possible microstates that are compatible with the macrostate in which the system is.

Same setup as before, but now the entropy is defined as: $$S = -k_B \sum_i p_i \ln p_i$$ where $p_i$ is the probability of the system being in the $i$-th microstate (again, taken from the pool of compatible microstates).
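The "little bit of work" that connects the first two definitions can be sketched in one line. This assumes that they take their standard forms, $S = k_B \ln \Gamma$ and $S = -k_B \sum_i p_i \ln p_i$, and that the Principle of Indifference assigns the same probability $p_i = 1/\Gamma$ to each of the $\Gamma$ compatible microstates:

$$S = -k_B \sum_{i=1}^{\Gamma} \frac{1}{\Gamma} \ln \frac{1}{\Gamma} = k_B \ln \Gamma \sum_{i=1}^{\Gamma} \frac{1}{\Gamma} = k_B \ln \Gamma$$

So, under equal probabilities, the second definition reduces to the first. For any non-uniform choice of $p_i$ over the same $\Gamma$ microstates, the second definition gives a smaller value.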
